Data science has proven to be a boon for IT companies and businesses. Its innovations include extracting value from the available information and understanding patterns in data, and then anticipating or producing results from the data obtained. Data analysts and scientists in business and research rely on it to inform their decisions. Big data analytics tools help companies better understand their customers, evaluate advertising campaigns, create content strategies, and develop products.

The data obtained may include new information collected from new initiatives as well as historical data from different sources. Data collected directly from customers is termed first-party data, whereas second-party data is collected from known organizations. Third-party data, on the other hand, is aggregated data that the company buys from a marketplace.

An Insight into the Big Data Analytics Tools

"Data analytics" refers to the process of inspecting datasets and drawing conclusions from the information they contain. Its techniques take raw data, uncover patterns in it, and extract valuable insights. They rely on specialized software and systems that integrate machine learning algorithms, automation, and other capabilities.

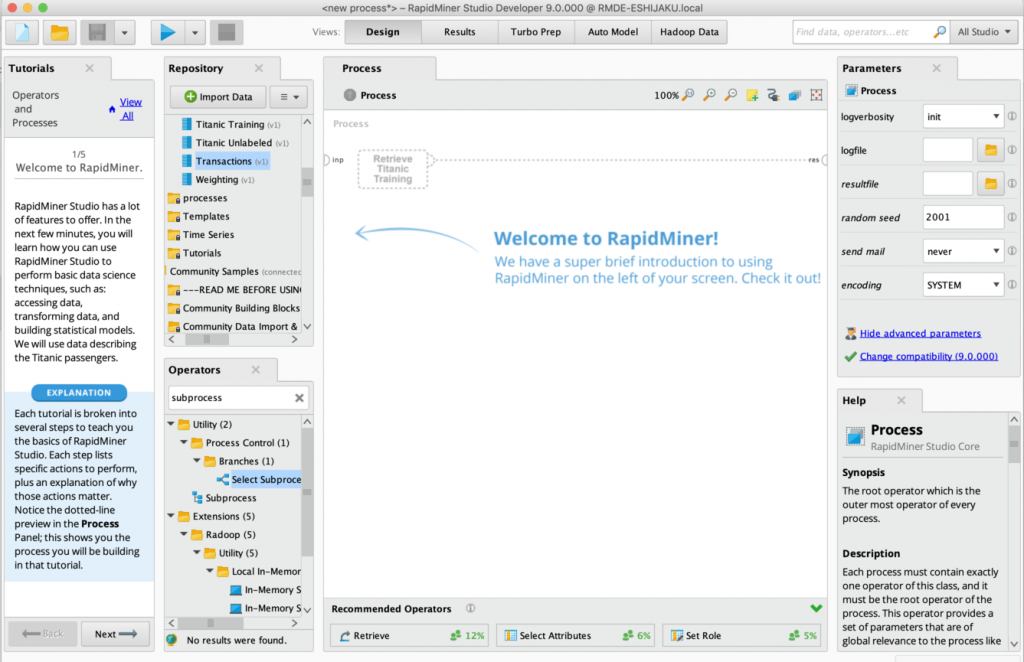

1. RapidMiner

RapidMiner is data analytics software developed in 2006. Previously known as YALE (Yet Another Learning Environment), its development took place with the help of Ralf Klinkenberg, Ingo Mierswa, and Simon Fischer at the Technical University of Dortmund. It uses a client/server model, with the server offered on-premises or on private or public cloud infrastructure.

RapidMiner offers data mining and machine learning processes, including data loading and transformation, data preprocessing and visualization, predictive analytics, statistical modelling, evaluation, and deployment. It can be extended with R and Python scripts. This data analytics tool has an open-source Java core and offers convenient access to frontline data science tools and algorithms. Its integration with APIs and the cloud is noteworthy.

RapidMiner's products are Studio, GO, Server, Real-Time Scoring, and Radoop. Companies that trust this big data analytics software for data processing and machine learning models include Hewlett Packard Enterprise, BMW, and Sanofi. The launch of RapidMiner 9.6 extended the platform to BI users and full-time coders. It is an end-to-end data science platform covering data processing, machine learning, and model operations.

Small enterprises pay $2,500 per user per year, medium or growing enterprises pay $5,000 per user per year, and large enterprises pay $10,000 per user per year. However, this big data software needs to improve its online services for users.

2. Xplenty

Xplenty is an extract, transform, load (ETL) platform founded by Yaniv Mor in 2012 that requires neither coding nor deployment. Its point-and-click interface allows simple data integration, processing, and preparation, and connects to a variety of data sources. Since its release, Xplenty has helped thousands of users organize their data and draw insights from it.

The data integration platform of this data analytics software comes with a complete toolkit for designing and deploying data integration processes. From data replication to data preparation, the simple interface allows scheduling jobs, tracking job progress and status, and sampling data outputs, and jobs can be executed through both the UI and the API. It integrates with well-known data stores and SaaS applications, among them Amazon Aurora, Oracle, SFTP, Asana, Salesforce, and Basecamp.

The Marketing Analytics Solution increases conversions and improves marketing strategies in four ways. Its data enrichment tools add to customer information and keep marketing automation up to date. Targeted communication is made possible by transferring data to customer relationship management (CRM) systems. Connecting to the marketing data also helps personalize the user experience.

Common integrations for this data analytics tool are Salesforce, Facebook Ads, HubSpot, and Google AdWords. This big data analytics tool provides insight into the stages of the customer lifecycle. Centralizing sales and metrics tools helps eliminate duplicate and out-of-date information, which gives a better understanding of the interests of prospective customers and leads. After a 7-day free trial, a subscription-based pricing plan applies.

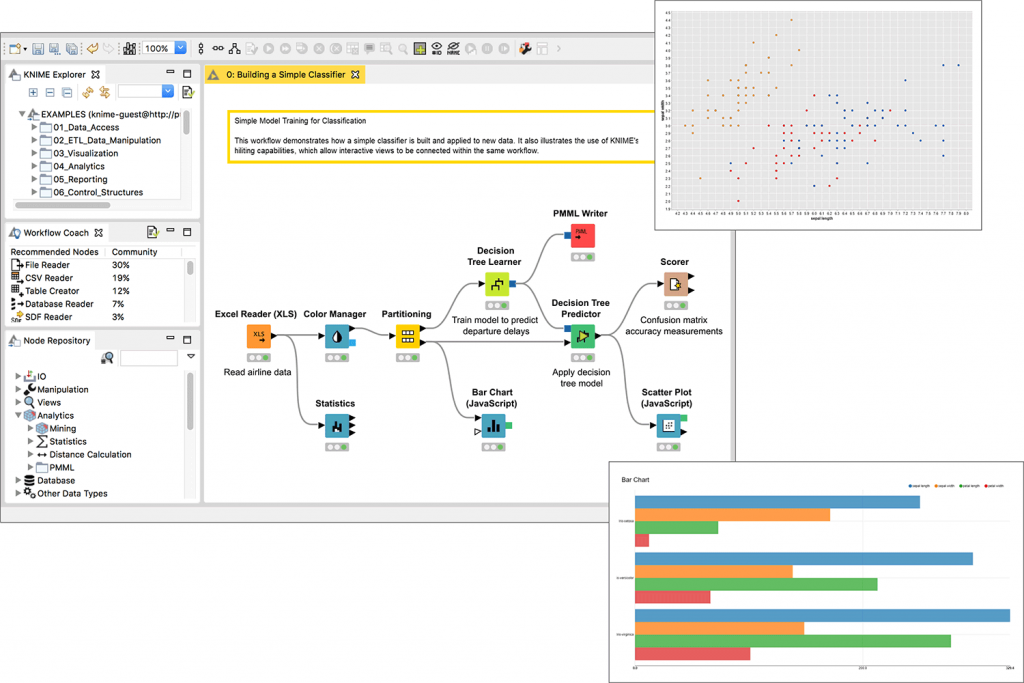

3. KNIME

KNIME, or Konstanz Information Miner, started its journey in 2004, headed by Michael Berthold. It is open-source data analytics software used for enterprise reporting, integration, research, data analytics, data and text mining, and business intelligence.

KNIME follows the concept of a modular data pipeline, which allows data mining through a graphical user interface. Data pre-processing, visualization, modelling, and analysis can all be done within KNIME.
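The idea of a modular data pipeline can be illustrated outside KNIME with a minimal Python sketch. This is not KNIME's API: the node functions (`drop_missing`, `scale`, `summarize`), the `run_pipeline` helper, and the sample records are all hypothetical names chosen for illustration. Each "node" takes the output of the previous one, so nodes can be swapped or reordered independently.

```python
from functools import reduce
from statistics import mean

# Hypothetical "nodes": each one takes a list of records and returns a new result.
def drop_missing(rows):
    """Pre-processing node: discard rows with a missing value."""
    return [r for r in rows if r["value"] is not None]

def scale(rows):
    """Transformation node: express each value as a fraction of the maximum."""
    top = max(r["value"] for r in rows)
    return [{**r, "value": r["value"] / top} for r in rows]

def summarize(rows):
    """Analysis node: reduce the rows to a single summary record."""
    return {"count": len(rows), "mean": mean(r["value"] for r in rows)}

def run_pipeline(data, nodes):
    """Feed the output of each node into the next one, in order."""
    return reduce(lambda acc, node: node(acc), nodes, data)

# Made-up sample data for the sketch.
raw = [{"id": 1, "value": 10}, {"id": 2, "value": None}, {"id": 3, "value": 40}]
result = run_pipeline(raw, [drop_missing, scale, summarize])
print(result)
```

Because every node has the same shape (data in, data out), adding a new step only means adding one more function to the list passed to `run_pipeline`.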

Workflows can be run in two ways, through the user interface or in batch mode, which allows local job management and regular execution of processes. One of the key features of this big data analytics software is its scalability. Its robust extensions allow companies to grow in whichever direction they choose.

This data analytics program needs to improve its data handling capacity. It would also benefit from integration with graph databases.

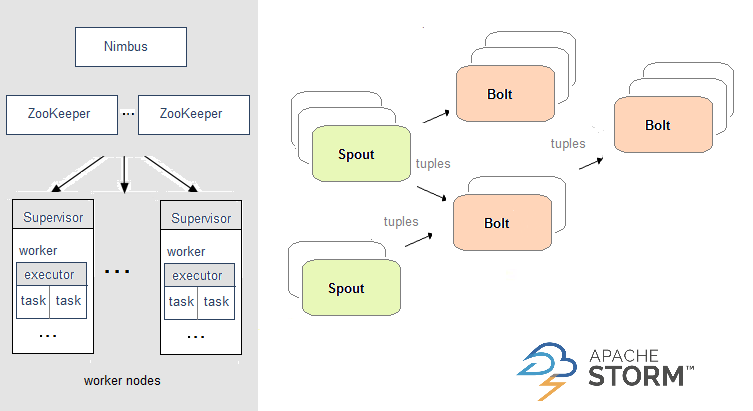

4. Storm

Apache Storm is big data analytics software created by Nathan Marz and developed by BackType and Twitter. It had a stable release in 2020. This data analytics tool is a cross-platform, distributed stream processing framework for fault-tolerant real-time computation.

This big data analytics software scales reliably and guarantees swift data processing. It is used for real-time analytics, ETL, continuous computation, distributed RPC, log processing, and machine learning. The tool is not easy to learn and use, and its native scheduler and Nimbus can become bottlenecks that slow down processing. Nevertheless, this big data analytics tool is free to use.

5. Talend

Fabrice Bonan and Bertrand Diard are the founders of Talend, which entered the world of data analytics in 2005. Cloud-based, it is a leader in data integration today.

Open Studio for Big Data is available free of cost under an open-source license. Its components and connectors cover Hadoop and NoSQL, and it comes with community support only. The Big Data Platform is included with the user-based subscription license.

The subscription provides email and phone support, and data processing and integration work in real time. Customized solutions are available to suit customers' needs, with diverse offerings under the same roof.

Like any other data analytics tool, this one has certain disadvantages. It could do better at community support, improve its user interface, and make it easier to add custom components to the palette. Open Studio for Big Data is free, and the other products are available with flexible, subscription-based pricing. The average cost may range up to $5,000 per user per year.

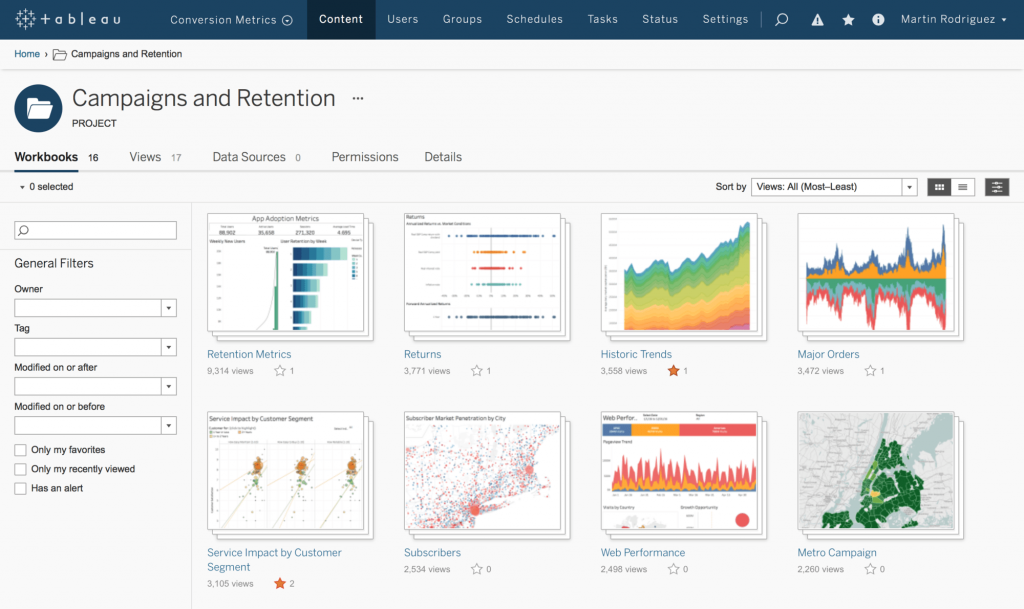

6. Tableau

Founded in 2003 by Christian Chabot, Andrew Beers, Pat Hanrahan, and Chris Stolte, Tableau has become known as fast-growing data visualization software. This big data analytics tool aims to turn raw data into an easily understandable format. Its eye-catching features include real-time analysis, data collaboration, and data blending.

Compared with Excel, Tableau has the following advantages. First, it is suited to handling and representing big data. Second, it offers moderate speed along with options to enhance and optimize the progress of an operation.

Third, this big data analytics software is usable without any coding knowledge and is available in cloud, server, and desktop versions. Overall, it exceeds Excel in major areas like visualizations, interactive dashboards, and the capacity to work with data on a large scale.

Its formatting tools need improvement, and built-in deployment and migration tools would have made this big data analytics tool a better fit. All editions offer a free trial. Paid versions start at $35 per user per month.

7. Cassandra

The Apache Software Foundation published a stable release of Apache Cassandra in 2020, following its initial release in 2008. This big data analytics tool is an open-source, distributed, wide-column store. It is a NoSQL database management system designed to handle data across commodity servers, with high availability and no single point of failure.

Cassandra scales well: more hardware can be added to accommodate more data and more customers as and when required. Having no single point of failure, this data analytics tool suits critical business applications that cannot afford any technical failure.

It provides fast, linear-scale performance: increasing the number of nodes raises throughput while response time is maintained. Cassandra supports all sorts of data formats, whether structured, unstructured, or semi-structured. A standout feature of this tool is flexible data distribution, which replicates data across multiple data centers.

8. Power BI

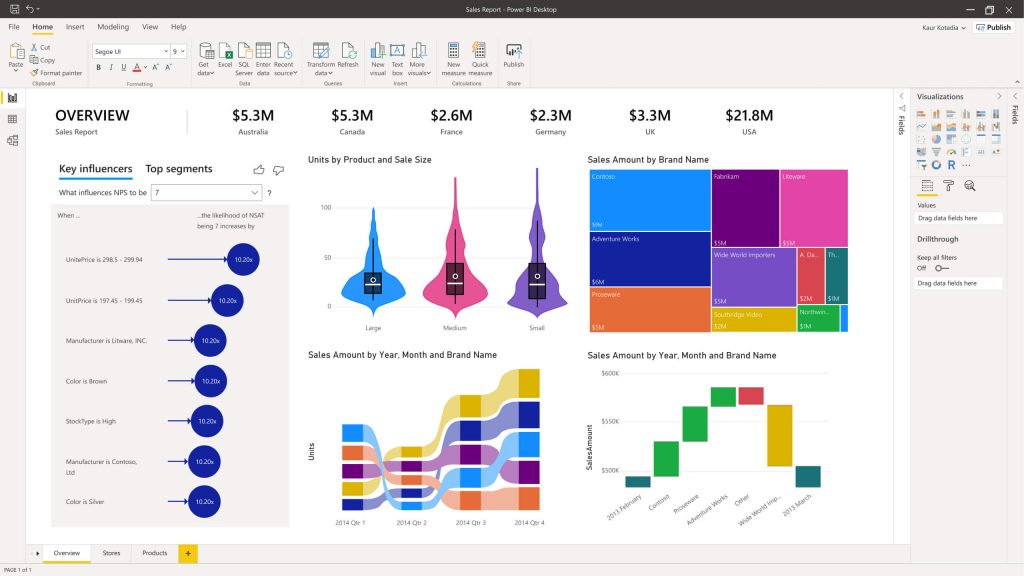

Developed by Microsoft Corporation, Power BI is a business analytics service that offers business intelligence capabilities and interactive visualizations, with an interface that lets users customize reports and dashboards.

It ingests data from diverse sources, processing big data and converting it into actionable insights. The products of this data analytics tool are Power BI Pro, Power BI Mobile, Power BI Desktop, Power BI Premium, Power BI Embedded, and Power BI Report Server.

These cover a range of BI and analytics needs. This data analytics software supports several languages, including Power Query, DAX, R, Python, and SQL. It integrates with over 100 on-premises and cloud-based data sources. It also supports artificial intelligence, machine learning, stream analytics, and big data analytics.

Prebuilt and custom visuals, along with workspaces with row-level security, make this one of the trusted data analytics tools on the market. It provides a free trial, with the paid version beginning at $9.99 per user per month.

9. Microsoft HDInsight

Azure HDInsight was developed in 2010 as part of the Microsoft family by Deepak Patil, who later went on to lead Dell's cloud business. This big data analytics tool has some striking features. It offers a fully managed cluster service for Apache Hadoop and Spark, creating clusters within minutes and running and deploying applications on them.

Besides, it detects changes and repairs issues around the clock. It runs the most time-sensitive workloads across thousands of cores and terabytes of memory, backed by a 99.9 per cent assurance for the whole software stack. As a result, data applications keep running smoothly while issues are fixed.

HDInsight protects the enterprise's most sensitive data assets using a full spectrum of security technologies, and it is deployed in multiple government clouds and more than 26 public regions across the world. Recently, this tool has been made available at half the original price. Microsoft HDInsight is worth trying out.

10. Skytree

Based in California, Skytree set out to develop and publish a machine learning platform for advanced data analytics. It empowers data analysts and researchers to create more advanced models faster and with greater ease.

This big data analytics tool offers highly scalable algorithms and artificial intelligence features that let data scientists understand the logic behind machine learning models and decisions. Programmatic and GUI access, model interpretability, and data preparation capabilities for robust predictive problem solving are other features that deserve a mention.

Ways to Use Data Analytics

1. Improved decision making

The insights gained from data can be used to inform company decisions, aiming at better outcomes. They take the guesswork out of choosing what content to create, planning marketing campaigns, and developing products, among others.

Because conditions change every day, companies can keep collecting and analyzing data and update their decisions as they go. Analytics provides a 360-degree view of how customers see the products and services. As a result, customers are better understood, which makes it easier to meet their needs.

2. More effective marketing

A better understanding of customer needs allows more effective marketing. Data analytics lets companies know how well their campaigns are doing, which in turn allows them to fine-tune those campaigns for the desired outcomes.

Big data tools give insight into which audience segments are most likely to interact with a campaign. This information is used to adjust the targeting criteria through automation, or to develop different messaging and creative for different segments.
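To make the segment idea concrete, here is a minimal Python sketch that computes a conversion rate per audience segment and picks the best-performing one. The segment labels, the event records, and the `conversion_by_segment` function are made-up assumptions for illustration, not output from any of the tools above.

```python
from collections import defaultdict

def conversion_by_segment(events):
    """Return {segment: conversions / impressions} for (segment, converted) pairs."""
    shown = defaultdict(int)
    converted = defaultdict(int)
    for segment, did_convert in events:
        shown[segment] += 1
        converted[segment] += int(did_convert)
    return {segment: converted[segment] / shown[segment] for segment in shown}

# Hypothetical campaign log: (audience segment, whether the viewer converted).
events = [
    ("18-24", True), ("18-24", False), ("18-24", False),
    ("25-34", True), ("25-34", True),
    ("35-44", False),
]

rates = conversion_by_segment(events)
best = max(rates, key=rates.get)  # segment with the highest conversion rate
print(rates, best)
```

In practice the sample size per segment matters as much as the rate itself, so a real analysis would also look at how many impressions each segment received before adjusting targeting.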

3. Better customer service

The data collected reveals information about customers' preferences, communications, interests, and concerns. Keeping the data organized in a central place allows the sales and marketing teams, as well as the customer service team, to be on the same page.

Data analytics allows a better understanding of customer needs. This lets companies tailor their offering to suit both prospective and existing customers, leading to better personalization and stronger relationships with customers.

4. Efficient operations

Data analytics software streamlines processes, saving money and boosting the bottom line. Understanding what customers want means less time is wasted on creating content and advertisements that don't match customers' interests.

As a result, less money is wasted. Besides, more concrete campaigns and content strategies can be proposed. This further boosts company revenue by increasing ad revenue, conversions, and subscriptions.

Technologies That Are Making Data Analytics More Useful

1. Machine learning

Machine learning is a subdivision of artificial intelligence, the field that develops computer systems to imitate human intelligence. Machine learning uses algorithms that analyse data and predict outcomes without the system being explicitly programmed for the task.
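As a rough illustration of predicting without explicit rules, the following Python sketch implements a one-nearest-neighbour classifier. No rule such as "spend above X means high value" is written anywhere; the label comes from the closest labelled example. The training points, the labels, and the `nearest_neighbor` function are invented for this sketch.

```python
import math

def nearest_neighbor(train, point):
    """Return the label of the training example closest to `point`."""
    closest = min(train, key=lambda example: math.dist(example[0], point))
    return closest[1]

# Hypothetical labelled examples: (visits per month, spend) -> customer value.
training = [
    ((1.0, 1.0), "low"),
    ((1.5, 2.0), "low"),
    ((8.0, 9.0), "high"),
    ((9.0, 8.5), "high"),
]

label_a = nearest_neighbor(training, (2.0, 1.5))
label_b = nearest_neighbor(training, (8.5, 8.0))
print(label_a, label_b)
```

Adding more labelled examples changes the predictions without touching the code, which is the sense in which the system is not explicitly programmed.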

2. Data management

Data management involves managing the flow of data into and out of the system and keeping it organized. A central data management platform preserves high-quality data for later use and ensures it is stored in an organized place and handled effectively.

3. Data mining

Data mining sorts through data to find patterns and relationships between data points. It enables sifting through large amounts of data and keeping the relevant parts for further reference.
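One simple kind of pattern that data mining looks for is items that frequently occur together. The Python sketch below counts item pairs across a set of shopping baskets and keeps those seen at least a minimum number of times. The baskets, the threshold, and the `frequent_pairs` function are assumptions made up for illustration; real tools use more scalable algorithms for the same idea.

```python
from collections import Counter
from itertools import combinations

def frequent_pairs(baskets, min_support):
    """Count every unordered item pair and keep those seen at least min_support times."""
    counts = Counter()
    for basket in baskets:
        # sorted(set(...)) removes duplicates and gives each pair one canonical order
        for pair in combinations(sorted(set(basket)), 2):
            counts[pair] += 1
    return {pair: n for pair, n in counts.items() if n >= min_support}

# Hypothetical purchase records.
baskets = [
    ["bread", "milk"],
    ["bread", "butter", "milk"],
    ["butter", "milk"],
    ["bread", "milk", "eggs"],
]

pairs = frequent_pairs(baskets, min_support=2)
print(pairs)
```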

4. Predictive analytics

Predictive analytics analyses historical data to predict future outcomes. It uses statistical algorithms and machine learning to make predictions that inform the decisions companies take to move forward and succeed.
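A minimal sketch of this idea, assuming a simple linear trend: fit a straight line to past monthly sales with ordinary least squares and extend it one month ahead. The sales figures and the `fit_line` function are hypothetical, and real forecasting would usually account for seasonality and uncertainty as well.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical historical data: month number -> units sold.
months = [1.0, 2.0, 3.0, 4.0]
sales = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(months, sales)
forecast = slope * 5.0 + intercept  # prediction for month 5
print(slope, intercept, forecast)
```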

Conclusion

The biggest challenge of data analytics is collecting data. Big data analytics tools are needed to gather data from website visits, ad clicks, and similar sources and deliver it in a usable format. Once collected, data from external and internal sources needs to be integrated and stored, whether offline or online.

This article covers some of the most well-known big data analytics programs along with their features, pros, and cons. I hope it helps you choose the right data analytics tool.